tva: Tab-separated Values Assistant

Fast, reliable TSV processing toolkit in Rust.

Overview

tva (pronounced “Tee-Va”) is a high-performance command-line toolkit written in Rust for

processing tabular data. It brings the safety and speed of modern systems programming to the classic

Unix philosophy.

Inspiration

- eBay’s tsv-utils (discontinued): The primary reference for functionality and performance.

- GNU Datamash: Statistical operations.

- R’s tidyverse: Reshaping concepts and string manipulation.

- xan: DSL and terminal-based plotting.

Use Cases

- “Middle Data”: Files too large for Excel/Pandas but too small for distributed systems ( Spark/Hadoop).

- Data Pipelines: Robust CLI-based ETL steps compatible with

awk,sort, etc. - Exploration: Fast summary statistics, sampling, and filtering on raw data.

Design Principles

- Single Binary: A standalone executable with no dependencies, easy to deploy.

- Header Aware: Manipulate columns by name or index.

- Fail-fast: Strict validation ensures data integrity (no silent truncation).

- Streaming: Stateless processing designed for infinite streams and large files.

- TSV-first: Prioritizes the reliability and simplicity of tab-separated values.

- Performance: Single-pass execution with minimal memory overhead.

Installation

Current release: 0.3.2

# Clone the repository and install via cargo

cargo install --force --path .

Or install the pre-compiled binary via the cross-platform package manager cbp (supports older Linux systems with glibc 2.17+):

cbp install tva

You can also download the pre-compiled binaries from the Releases page.

Running Examples

The examples in the documentation use sample data located in the docs/data/ directory. To run

these examples yourself, we recommend cloning the repository:

git clone https://github.com/wang-q/tva.git

cd tva

Then you can run the commands exactly as shown in the docs (e.g.,

tva select -f 1 docs/data/input.csv).

Alternatively, you can download individual files from the docs/data directory on GitHub.

Commands

Subset Selection

Select specific rows or columns from your data.

select: Select and reorder columns.filter: Filter rows based on numeric, string, or regex conditions.slice: Slice rows by index (keep or drop). Supports multiple ranges and header preservation.sample: Randomly sample rows (Bernoulli, reservoir, weighted).

Data Transformation

Transform the structure or values of your data.

longer: Reshape wide to long (unpivot). Requires a header row.wider: Reshape long to wide (pivot). Supports aggregation via--op(sum, count, etc.).fill: Fill missing values in selected columns (down/LOCF, const).blank: Replace consecutive identical values in selected columns with empty strings ( sparsify).transpose: Swaps rows and columns (matrix transposition).

Expr Language

Expression-based transformations for complex data manipulation.

expr: Evaluate expressions and output results.extend: Add new columns to each row (alias forexpr -m extend).mutate: Modify existing column values (alias forexpr -m mutate).

Data Organization

Organize and combine multiple datasets.

sort: Sorts rows based on one or more key fields.reverse: Reverses the order of lines (liketac), optionally keeping the header at the top.join: Join two files based on common keys.append: Concatenate multiple TSV files, handling headers correctly.split: Split a file into multiple files (by size, key, or random).

Statistics & Summary

Calculate statistics and summarize your data.

stats: Calculate summary statistics (sum, mean, median, min, max, etc.) with grouping.bin: Discretize numeric values into bins (useful for histograms).uniq: Deduplicate rows or count unique occurrences (supports equivalence classes).

Visualization

Visualize your data in the terminal.

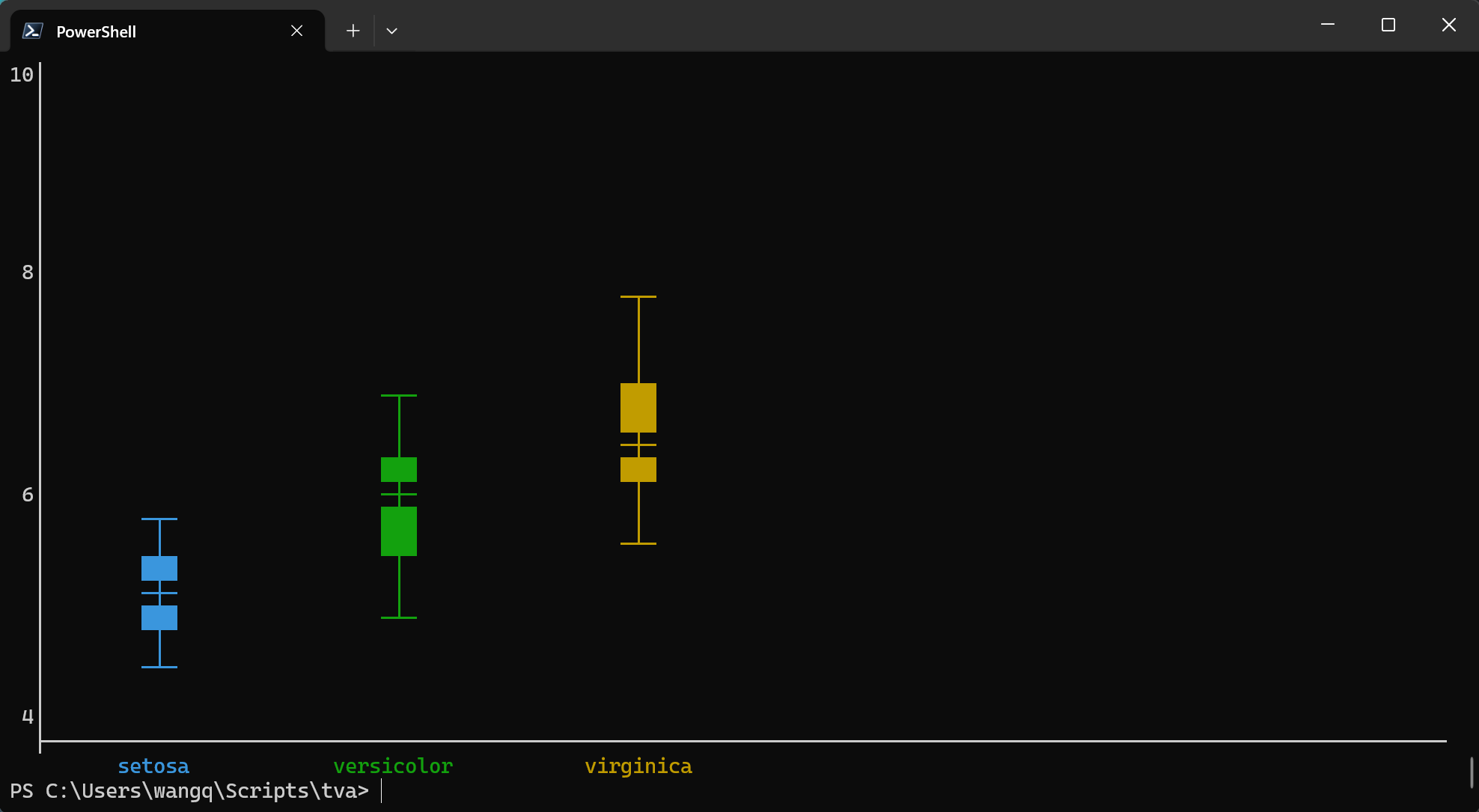

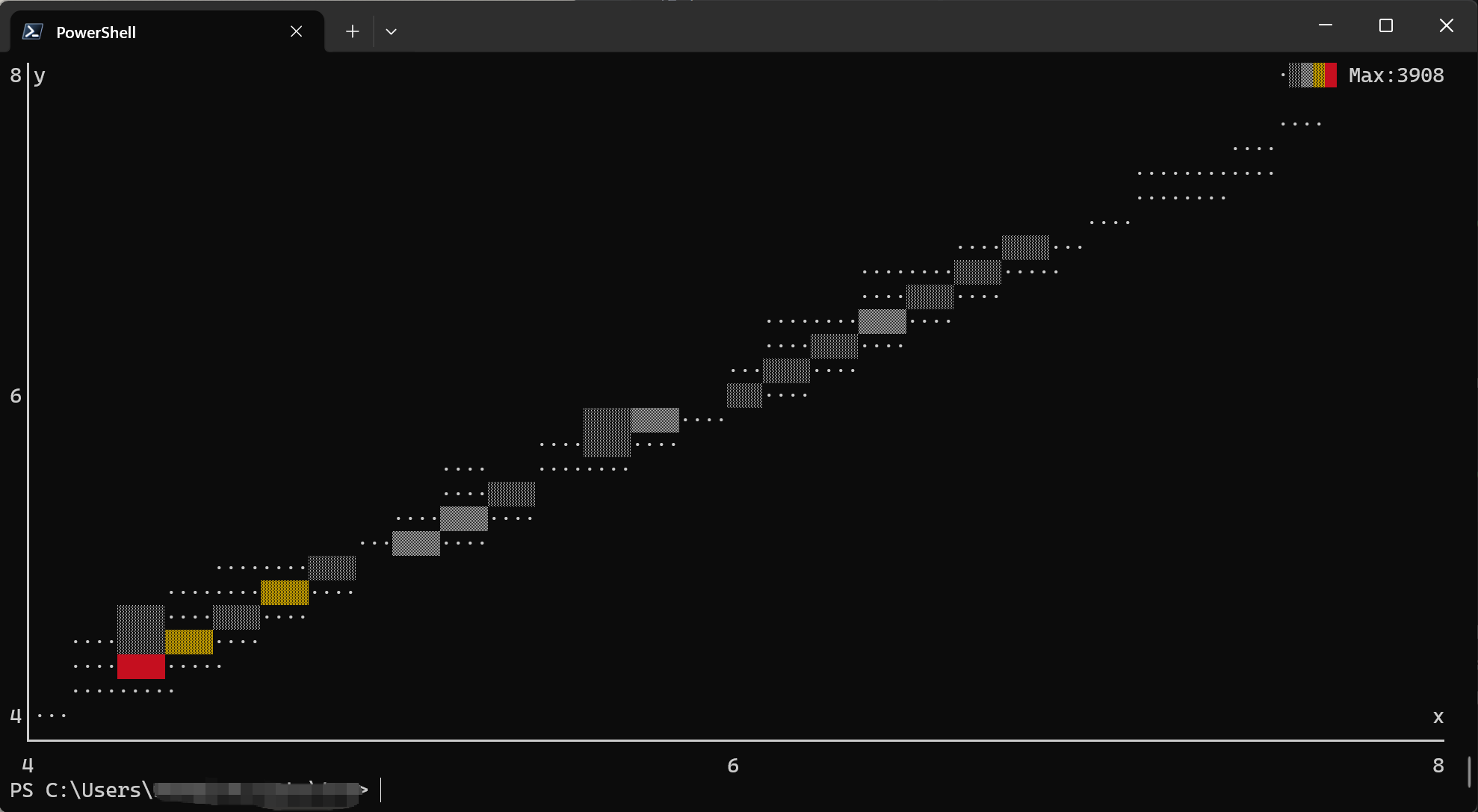

plot point: Draw scatter plots or line charts in the terminal.plot box: Draw box plots (box-and-whisker plots) in the terminal.plot bin2d: Draw 2D histograms/heatmaps in the terminal.

Formatting & Utilities

Format and validate your data.

check: Validate TSV file structure (column counts, encoding).nl: Add line numbers to rows.keep-header: Run a shell command on the body of a TSV file, preserving the header.

Import & Export

Convert data to and from TSV format.

from: Convert other formats to TSV (csv,xlsx,html).to: Convert TSV to other formats (csv,xlsx,md).

Author

Qiang Wang wang-q@outlook.com

License

MIT. Copyright by Qiang Wang.

tva Design

This document outlines the core design decisions behind tva, drawing inspiration from the

original TSV Utilities by eBay.

Why TSV?

The Tab-Separated Values (TSV) format is chosen over Comma-Separated Values (CSV) for several key reasons, especially in data mining and large-scale data processing contexts:

1. No Escapes = Reliability & Speed

- CSV Complexity: CSV uses escape characters (usually quotes) to handle delimiters (commas) and newlines within fields. Parsing this requires a state machine, which is slower and prone to errors in ad-hoc scripts.

- TSV Simplicity: TSV disallows tabs and newlines within fields. This means:

- Parsing is trivial:

split('\t')works reliably. - Record boundaries are clear: Every newline is a record separator.

- Performance: Highly optimized routines can be used to find delimiters.

- Robustness: No “malformed CSV” errors due to incorrect quoting.

- Parsing is trivial:

2. Unix Tool Compatibility

- Traditional Unix tools (

cut,awk,sort,join,uniq) work seamlessly with TSV files by specifying the delimiter (e.g.,cut -f1). - The CSV Problem: Standard Unix tools fail on CSV files with quoted fields or newlines. This

forces CSV toolkits (like

xsv) to re-implement standard operations (sorting, joining) just to handle parsing correctly. - The TSV Advantage:

tvaleverages the simplicity of TSV. Whiletvaprovides its ownsortandjoinfor header awareness and Windows support, the underlying data remains compatible with the vast ecosystem of standard Unix text processing tools.

Why Rust?

tva is implemented in Rust, differing from the original TSV Utilities (written in D).

1. Safety & Performance

- Memory Safety: Rust’s ownership model ensures memory safety without a garbage collector, crucial for high-performance data processing tools.

- Zero-Cost Abstractions: High-level constructs (iterators, closures) compile down to efficient machine code, often matching or beating C/C++.

- Predictable Performance: No GC pauses means consistent throughput for large datasets.

2. Cross-Platform & Deployment

- Single Binary: Rust compiles to a static binary with no runtime dependencies (unlike Python or Java).

- Windows Support: Rust has first-class support for Windows, making

tvaeasily deployable on non-Unix environments (a key differentiator from many Unix-centric tools).

Design Goals

1. Unix Philosophy

- Do One Thing Well: Each subcommand (

filter,select,stats) focuses on a specific task. - Pipeable: Tools read from stdin and write to stdout by default, enabling powerful pipelines:

tva filter --gt score:0.9 data.tsv | tva select name,score | tva sort -k score - Streaming: Stateless where possible to support infinite streams and large files.

2. Header Awareness

- Unlike generic Unix tools,

tvais aware of headers. - Field Selection: Columns can be selected by name (

--fields user_id) rather than just index. - Header Preservation: Operations like

filterorsampleautomatically preserve the header row.

3. TSV-first

- Default separator is TAB.

- Processing revolves around the “Row + Field” model.

- CSV is treated as an import format (

from-csv), but core logic is TSV-centric.

4. Explicit CLI & Fail-fast

- Options should be explicit (no “magic” behavior).

- Strict error handling: mismatched field counts or broken headers result in immediate error exit ( stderr + non-zero status), rather than silent truncation.

5. High Performance

- Aim for single-pass processing.

- Avoid unnecessary allocations and sorting.

6. Single-Threaded by Default

Core Philosophy: Single-threaded extreme performance + external parallel tools

tva adopts a single-threaded model for most data processing scenarios. This is not a

technical limitation, but an active choice based on Unix philosophy:

- Do One Thing Well:

tvafocuses on streaming data parsing, transformation, and statistics, leaving parallel scheduling complexity to specialized tools (like GNU Parallel). - Don’t Reinvent the Wheel: GNU Parallel is already a mature, powerful parallel task scheduler.

Rather than implementing complex thread pools and task distribution inside

tva, it’s better to maketvathe best partner for Parallel. - Determinism and Simplicity: Single-threaded models make data processing order naturally deterministic, debugging easier, and greatly reduce memory management complexity and overhead ( lock-free, zero-copy easier to achieve).

Implementation Details

tva adopts several optimization strategies similar to tsv-utils to ensure high performance:

1. Buffered I/O

- Input: Uses

std::io::BufReaderto minimize system calls when reading large files. Transparently handles.gzfiles (viaflate2). - Output: Uses

std::io::BufWriterto batch writes, significantly improving throughput for commands that produce large output.

2. Zero-Copy & Re-use

- String Reuse: Where possible,

tvareuses allocated string buffers (e.g., viaread_lineinto a cleared String) to avoid the overhead of repeated memory allocation and deallocation. - Iterator-Based Processing: Leverages Rust’s iterator lazy evaluation to process data line-by-line without loading entire files into memory, enabling processing of datasets larger than RAM.

Performance Architecture & Benchmarks

tva is built on a philosophy of “Zero-Copy” and “SIMD-First”. We continuously benchmark different

parsing strategies to ensure tva remains the fastest tool for TSV processing.

Parsing Strategy Evolution

We compared multiple parsing strategies to find the optimal balance between speed and correctness. The evolution shows a clear progression from naive implementations to hand-optimized SIMD:

- Naive Split → Memchr-based → Single-Pass SIMD → Hand-written SIMD

- Each step eliminates overhead: allocation, function calls, or redundant scanning.

Latest Benchmark Results

Test Data 1: Short Fields, Few Columns (5 cols, ~8 bytes/field)

| Implementation | Time | Throughput | Notes |

|---|---|---|---|

| TVA for_each_row | 43 µs | 1.63 GiB/s | Current: Hand-written SIMD (SSE2), true single-pass |

| simd-csv | 80 µs | 905 MiB/s | Hybrid SIMD state machine, previous ceiling |

| TVA for_each_line + memchr | 87 µs | 830 MiB/s | Two-pass: SIMD for lines, memchr for fields |

| Memchr Reused Buffer | 113 µs | 639 MiB/s | Line-by-line memchr, limited by function call overhead |

| csv crate | 130 µs | 556 MiB/s | Classic DFA state machine, correctness baseline |

| Naive Split | 562 µs | 129 MiB/s | Original implementation, slowest |

Test Data 2: Wide Rows, Many Columns (20 cols, ~6 bytes/field)

| Implementation | Time | Throughput | Notes |

|---|---|---|---|

| TVA for_each_row | 128 µs | 896 MiB/s | Current: Hand-written SIMD (SSE2), true single-pass |

| simd-csv | 180 µs | 635 MiB/s | Hybrid SIMD state machine |

| TVA for_each_line + memchr | 247 µs | 463 MiB/s | Two-pass: SIMD for lines, memchr for fields |

| Memchr Reused Buffer | 344 µs | 333 MiB/s | Line-by-line memchr |

| csv crate | 320 µs | 358 MiB/s | Classic DFA state machine |

| Naive Split | 1167 µs | 98 MiB/s | Original implementation |

Key Findings:

- Performance Leap:

for_each_rowachieves 1.63 GiB/s on short fields—1.8x faster thansimd-csvand 12.6x faster than naive split. On wide rows, it maintains 896 MiB/s, demonstrating consistent advantage across data shapes. - Single-Pass Wins: True single-pass scanning outperforms two-pass approaches by ~95% regardless of row width, as more delimiter searches are eliminated.

- Scalability: All implementations show expected throughput decrease on wide rows (more delimiters to process), but TVA’s single-pass approach maintains the lead.

TSV Parser Design

This section details the design of tva’s custom TSV parser, which leverages the simplicity of the

TSV format to achieve high performance.

Format Differences: CSV vs TSV

| Feature | CSV (RFC 4180) | TSV (Simple) | Impact |

|---|---|---|---|

| Delimiter | , (variable) | \t (fixed) | TSV can hardcode delimiter, enabling SIMD optimization. |

| Quotes | Supports " wrapping | Not supported | TSV eliminates “in_quote” state machine, removing branch misprediction. |

| Escapes | "" escapes quotes | None | TSV supports true zero-copy slicing without rewriting. |

| Newlines | Allowed in fields | Not allowed | TSV guarantees \n always means record end, enabling parallel chunking. |

Implementation

Architecture:

src/libs/tsv/simd/

├── mod.rs - DelimiterSearcher trait, platform abstraction

├── sse2.rs - x86_64 SSE2 implementation (128-bit vectors)

└── neon.rs - aarch64 NEON implementation (128-bit vectors)

Key Design Decisions:

- Hand-written SIMD: Platform-specific searchers simultaneously scan for

\tand\n, eliminating generic library overhead. - Single-Pass Scanning: All delimiter positions are found in one pass, storing field boundaries in a pre-allocated array. This eliminates the ~95% overhead of two-pass approaches.

- Unified CR Handling: Only

\tand\nare searched during SIMD scan. When\nis found, we check if the preceding byte is\r. This reduces register pressure compared to searching for three characters simultaneously. - Zero-Copy API:

TsvRowstructs yield borrowed slices into the internal buffer, eliminating per-row allocation.

Platform Support:

- x86_64: SSE2 intrinsics (baseline for all x86_64 CPUs)

- aarch64: NEON intrinsics (baseline for all ARM64 CPUs)

- Fallback:

memchr2for other platforms

Performance Validation

| Metric | Target | Achieved | Status |

|---|---|---|---|

| Throughput (short fields) | 2-3 GiB/s | 1.63 GiB/s | ✅ Near theoretical limit |

Speedup vs simd-csv | 1.5-2x | 1.8x | ✅ Exceeded target |

| Speedup vs memchr2 | 1.5-2x | 2.0x | ✅ Achieved target |

Key Insights:

- SSE2 over AVX2: 128-bit SSE2 outperformed 256-bit AVX2. Wider registers added overhead without proportional gains for TSV’s simple structure.

- Single-Pass Architecture: The dominant performance factor, providing ~95% improvement over two-pass approaches regardless of data shape.

Common Behavior & Syntax

tva tools share a consistent set of behaviors and syntax conventions, making them easy to learn

and combine.

Field Syntax

All tools use a unified syntax to identify fields (columns). See Field Syntax Documentation for details.

- Index:

1(first column),2(second column). - Range:

1-3(columns 1, 2, 3). - List:

1,3,5. - Name:

user_id(requires--header). - Wildcard:

user_*(matchesuser_id,user_name, etc.). - Exclusion:

--exclude 1,2(select all except 1 and 2).

Header Processing

- Input: Most tools accept a

--header(or-H) flag to indicate the first line of input is a header. This enables field selection by name.- Note: The

longerandwidercommands assume a header by default.

- Note: The

- Output: When

--headeris used,tvaensures the header is preserved in the output (unless explicitly suppressed). - No Header: Without this flag, the first row is treated as data. Field selection is limited to indices (no names).

- Multiple Files: If processing multiple files with

--header:- The header from the first file is written to output.

- Headers from subsequent files are skipped (assumed to be identical to the first).

- Validation: Field counts must be consistent;

tvafails immediately on jagged rows.

Multiple Files & Standard Input

- Standard Input: If no files are provided, or if

-is used as a filename,tvareads from standard input (stdin). - Concatenation: When multiple files are provided,

tvaprocesses them sequentially as a single continuous stream of data (logical concatenation).- Example:

tva filter --gt value:10 file1.tsv file2.tsvprocesses both files.

- Example:

Comparison with Other Tools

tva is designed to coexist with and complement other excellent open-source tools for tabular

data.It combines the strict, high-performance nature of tsv-utils with the cross-platform

accessibility and modern ecosystem of Rust.

| Feature | tva (Rust) | tsv-utils (D) | xsv / qsv (Rust) | datamash (C) |

|---|---|---|---|---|

| Primary Format | TSV (Strict) | TSV (Strict) | CSV (Flexible) | TSV (Default) |

| Escapes | No | No | Yes | No |

| Header Aware | Yes | Yes | Yes | Partial |

| Field Syntax | Names & Indices | Names & Indices | Names & Indices | Indices |

| Platform | Cross-platform | Unix-focused | Cross-platform | Unix-focused |

| Performance | High | High | High (CSV cost) | High |

Detailed Breakdown

- tsv-utils (D):

- The direct inspiration for

tva.tvaaims to be a Rust-based alternative that is easier to install (no D compiler needed) and extends functionality (e.g.,sample,slice).

- The direct inspiration for

- qsv (Rust):

- The premier tools for CSV processing.

- Because they must handle CSV escapes, they are inherently more complex than TSV-only tools.

- Use these if you must work with CSVs directly; use

tvaif you can convert to TSV for faster, simpler processing.

- GNU Datamash (C):

- Excellent for statistical operations (groupby, pivot) on TSV files.

tva statsis similar but adds header awareness and named field selection, making it friendlier for interactive use.

- Miller (mlr) (C):

- A powerful “awk for CSV/TSV/JSON”. Supports many formats and complex transformations.

- Miller is a DSL (Domain Specific Language);

tvafollows the “do one thing well” Unix philosophy with separate subcommands.

- csvkit (Python):

- Very feature-rich but slower due to Python overhead. Great for converting obscure formats ( XLSX, DBF) to CSV/TSV.

- GNU shuf (C):

- Standard tool for random permutations.

tva sampleadds specific data science sampling methods: weighted sampling (by column value) and Bernoulli sampling.

Aggregation Architecture

This section provides a deep dive into the architectural differences between tva and other tools

like xan (Rust) and tsv-utils (D Language) in their aggregation module designs.

tva: Runtime Polymorphism with SoA Memory Layout

Design: Hybrid Struct-of-Arrays (SoA). The Schema (StatsProcessor) builds the computation

graph at runtime, while the State (Aggregator) uses compact columnar storage (Vec<f64>,

Vec<String>). Computation logic is dynamically dispatched via Box<dyn Calculator> trait objects.

Advantages:

- Memory Efficient: Even with millions of groups, each group’s

Aggregatoroverhead is minimal (only a fewVecheaders). - Modular: Adding new operators only requires implementing the

Calculatortrait, completely decoupled from existing code. - Fast Compilation: Compared to generic/template bloat,

dyn Traitsignificantly reduces compile times and binary size. - Deterministic: Uses

IndexMapto guarantee that GroupBy output order matches the first-occurrence order in the input.

Trade-offs: Virtual function calls (vtable) have a tiny overhead compared to inlined code

in extremely high-frequency loops (e.g., 10 calls per row), but this is usually negligible in

I/O-bound CLI tools.

Other Tools

xan: Uses enum dispatch (enum Agg { Sum(SumState), ... }) to avoid heap allocation, but

requires modifying core enum definitions to add new operators.

tsv-utils (D): Uses compile-time template specialization for extreme performance, but has long compile times and high code complexity.

datamash (C): Uses sort-based grouping with O(1) memory, but requires pre-sorted input.

dplyr (R): Uses vectorized mask evaluation, but depends on columnar storage and is unsuitable for streaming.

Expr Language

TVA’s Expr language is designed for concise, shell-friendly data processing:

Source → Pest Parser → AST (Expr) → Direct Interpretation (eval)

↑______________________________↓

(Parse Cache)

Design Principles

- Conciseness: Short syntax for common operations (e.g.,

@1,@namefor column references). - Shell-friendly: Avoids conflicts with Shell special characters (

$,`,!). - Streaming: Row-by-row evaluation with no global state, suitable for big data.

- Type-aware: Recognizes numbers/dates when needed, treats data as strings by default for speed.

- Error Handling: Defaults to permissive mode (invalid operations return

null). - Consistency: Similar to jq/xan to reduce learning costs.

Expr Engine Optimizations

| Optimization | Technique | Speedup |

|---|---|---|

| Global Function Registry | OnceLock static registry | 35-57x |

| Parse Cache | HashMap<String, Expr> caching | 12x |

| Column Name Resolution | Compile-time name→index conversion | 3x |

| Constant Folding | Compile-time constant evaluation | 10x |

| HashMap (ahash) | Faster HashMap implementation | 6% |

Details:

- Parse caching: Expressions are parsed once and cached for all rows. Identical expressions reuse the cached AST.

- Column name resolution: When headers are available,

@namereferences are resolved to@indexat parse time for O(1) access. - Constant folding: Constant sub-expressions (e.g.,

2 + 3 * 4) are pre-computed during parsing. - Function registry: Built-in functions are looked up once and cached, avoiding repeated hash map lookups.

- Hash algorithm: Uses

ahashfor faster hash map operations.

For best performance, use column indices (@1, @2) instead of names.

性能基准测试计划

我们旨在重现 tsv-utils 使用的严格基准测试策略。

1. 基准工具

- tsv-utils (D): 主要性能对标目标。

- qsv (Rust): xsv 的活跃分支, 功能超级强大。

- GNU datamash (C): 统计操作的标准。

- GNU awk / mawk (C): 行过滤和基本处理的基准。

- csvtk (Go): 另一个现代跨平台工具包。

2. 测试数据集与策略

我们将使用不同规模的数据集来全面评估性能。

数据集来源

- HEPMASS (4.8GB): UCI Machine Learning Repository。

- 内容: 约 700 万行,29 列数值数据。

- 用途: 用于数值行过滤、列选择、统计摘要和文件连接测试。

- FIA Tree Data (2.7GB):

USDA Forest Service。

- 内容:

TREE_GRM_ESTN.csv的前 1400 万行,包含混合文本和数值。 - 用途: 用于正则行过滤和CSV 转 TSV测试。

- 内容:

测试策略

- 吞吐量与稳定性 (大文件):

- 使用完整的 GB 级数据集 (HEPMASS, FIA Tree Data)。

- 目标: 压力测试流处理能力、内存稳定性以及 I/O 吞吐量。

- 启动开销 (小文件):

- 使用HEPMASS_100k (~70MB, HEPMASS 的前 10 万行)。

- 目标: 测试工具的启动开销 (Startup Overhead) 和缓冲策略。 对于极短的运行时间,Rust/C 的启动时间差异会更明显。

3. 详细测试场景

为了确保公平和全面的对比,我们将执行以下具体场景(参考 tsv-utils 2017/2018):

- 数值行过滤 (Numeric Filter):

- 逻辑: 多列数值比较 (例如

col4 > 0.000025 && col16 > 0.3)。 - 基准:

tva filtervsawk(mawk/gawk) vstsv-filter(D) vsqsv search(Rust)。 - 目的: 测试数值解析和比较的效率。

- 逻辑: 多列数值比较 (例如

- 正则行过滤 (Regex Filter):

- 逻辑: 针对特定文本列的正则匹配 (例如

[RD].*(ION[0-2]))。 - 基准:

tva filter --regexvsgrep/awk/ripgrep(如果适用) vstsv-filtervsqsv search。 - 注意: 区分全行匹配与特定字段匹配。

- 逻辑: 针对特定文本列的正则匹配 (例如

- 列选择 (Column Selection):

- 逻辑: 提取分散的列 (例如 1, 8, 19)。

- 基准:

tva selectvscutvstsv-selectvsqsv selectvscsvtk cut。 - 注意: 测试不同文件大小。GNU

cut在小文件上通常非常快, 但在大文件上可能不如流式优化工具。 - 短行测试 (Short Lines): 针对海量短行数据(如 8600 万行, 1.7GB)进行测试, 主要考察每行处理的固定开销。

- 文件连接 (Join):

- 数据准备: 将大文件拆分为两个文件(例如: 左文件含列 1-15,右文件含列 1, 16-29),并 随机打乱行顺序, 但保留公共键(列 1)。

- 逻辑: 基于公共键将两个乱序文件重新连接。

- 基准:

tva joinvsjoin(Unix - 需先 sort) vsqsv joinvstsv-joinvscsvtk join。 - 目的: 测试哈希表构建和查找的内存与速度平衡。

- 统计摘要 (Summary Statistics):

- 逻辑: 计算多个列的 Count, Sum, Min, Max, Mean, Stdev。

- 基准:

tva statsvsdatamashvstsv-summarizevsqsv statsvscsvtk summary。

- CSV 转 TSV (CSV to TSV):

- 逻辑: 处理包含转义字符和嵌入换行符的复杂 CSV。

- 基准:

tva from csvvsqsv fmtvscsvtk csv2tabvscsv2tsv(tsv-utils)。 - 目的: 这是一个高计算密集型任务,测试 CSV 解析器的性能。

- 加权随机采样 (Weighted Sampling):

- 逻辑: 基于权重列进行加权随机采样 (Weighted Reservoir Sampling)。

- 基准:

tva sample --weightvstsv-samplevsqsv sample(如果支持)。 - 目的: 测试复杂算法与 I/O 的结合效率。

- 去重 (Deduplication):

- 逻辑: 基于特定列进行哈希去重。

- 基准:

tva uniqvstsv-uniqvsawkvssort | uniq。 - 目的: 测试哈希表性能和内存管理。

- 排序 (Sorting):

- 逻辑: 基于数值列进行排序。

- 基准:

tva sortvssort(GNU) vstsv-sort。 - 目的: 测试外部排序算法和内存使用。

- 切片 (Slicing):

- 逻辑: 提取文件中间的大段行 (如第 100 万 到 200 万 行)。

- 基准:

tva slicevssedvstail | head。 - 目的: 测试快速跳过行的能力。

- 反转 (Reverse):

- 逻辑: 反转整个文件的行序。

- 基准:

tva reversevstac。

- 追加 (Append):

- 逻辑: 连接多个大文件。

- 基准:

tva appendvscat。

- 导出 CSV (Export to CSV):

- 逻辑: 将 TSV 转换为标准 CSV (处理转义)。

- 基准:

tva to csvvsqsv fmt。

4. 执行环境与记录

- 硬件记录: 必须记录 CPU 型号、核心数、RAM 大小以及磁盘类型 (NVMe SSD 对 I/O 密集型测试影响巨大)。

- 软件版本:

- Rust 编译器版本 (

rustc --version)。 - 所有对比工具的版本 (

qsv --version,awk --version等)。

- Rust 编译器版本 (

- 预热 (Warmup): 使用

hyperfine --warmup确保文件系统缓存处于一致状态( 通常是热缓存状态)。

5. 执行工作流示例

我们将使用内联 Bash 脚本与 hyperfine 结合,实现完全自动化的基准测试。

# 1. 数据准备 (Data Preparation)

# ------------------------------

# 下载并解压 HEPMASS (如果不存在)

if [ ! -f "hepmass.tsv" ]; then

echo "Downloading HEPMASS dataset..."

curl -O https://archive.ics.uci.edu/ml/machine-learning-databases/00347/all_train.csv.gz

gzip -d all_train.csv.gz

# 转换为 TSV

tva from csv all_train.csv > hepmass.tsv

fi

# 准备 Join 测试数据 (拆分并乱序)

if [ ! -f "hepmass_left.tsv" ]; then

echo "Preparing Join datasets..."

# 添加行号作为唯一键

tva nl -H --header-string "row_id" hepmass.tsv > hepmass_numbered.tsv

# 拆分并打乱

tva select -f 1-16 hepmass_numbered.tsv | tva sample -H > hepmass_left.tsv

tva select -f 1,17-30 hepmass_numbered.tsv | tva sample -H > hepmass_right.tsv

rm hepmass_numbered.tsv

fi

# 2. 运行基准测试 (Run Benchmark)

# ------------------------------

echo "Running Benchmarks..."

# Scenario 1: Numeric Filter

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_filter.csv \

-n "tva filter" "tva filter -H --gt 1:0.5 hepmass.tsv > /dev/null" \

-n "tsv-filter" "tsv-filter -H --gt 1:0.5 hepmass.tsv > /dev/null" \

-n "awk" "awk -F '\t' '\$1 > 0.5' hepmass.tsv > /dev/null"

# Scenario 2: Column Selection

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_select.csv \

-n "tva select" "tva select -f 1,8,19 hepmass.tsv > /dev/null" \

-n "tsv-select" "tsv-select -f 1,8,19 hepmass.tsv > /dev/null" \

-n "cut" "cut -f 1,8,19 hepmass.tsv > /dev/null"

# Scenario 3: Join

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_join.csv \

-n "tva join" "tva join -H -f hepmass_right.tsv -k 1 hepmass_left.tsv > /dev/null" \

-n "tsv-join" "tsv-join -H -f hepmass_right.tsv -k 1 hepmass_left.tsv > /dev/null" \

-n "xan join" "xan join -d '\t' --semi row_id hepmass_left.tsv row_id hepmass_right.tsv > /dev/null"

# qsv join is too slow

# "qsv join row_id hepmass_left.tsv row_id hepmass_right.tsv > /dev/null"

# Scenario 4: Summary Statistics

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_stats.csv \

-n "tva stats" "tva stats -H --count --sum 3,5,20 --min 3,5,20 --max 3,5,20 --mean 3,5,20 --stdev 3,5,20 hepmass.tsv > /dev/null" \

-n "tsv-summarize" "tsv-summarize -H --count --sum 3,5,20 --min 3,5,20 --max 3,5,20 --mean 3,5,20 --stdev 3,5,20 hepmass.tsv > /dev/null"

# Scenario 5: Weighted Sampling (k=1000)

# Assumes column 5 is a suitable weight (positive float)

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_sample.csv \

-n "tva sample" "tva sample -H --weight-field 5 -n 1000 hepmass.tsv > /dev/null" \

-n "tsv-sample" "tsv-sample -H --weight-field 5 -n 1000 hepmass.tsv > /dev/null"

# Scenario 6: Uniq (Hash-based Deduplication)

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_uniq.csv \

-n "tva uniq" "tva uniq -H -f 1 hepmass.tsv > /dev/null" \

-n "tsv-uniq" "tsv-uniq -H -f 1 hepmass.tsv > /dev/null"

# Scenario 8: Slice (Middle of file)

hyperfine \

--warmup 3 \

--min-runs 5 \

--export-csv benchmark_slice.csv \

-n "tva slice" "tva slice -r 1000000-2000000 hepmass.tsv > /dev/null" \

-n "sed" "sed -n '1000000,2000000p' hepmass.tsv > /dev/null"

7. expr 对比 专用命令

使用 docs/data/diamonds.tsv

filter

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_filter.tmp.md \

-n "tsv-filter" "tsv-filter -H --gt carat:1 --str-eq cut:Premium --lt price:3000 docs/data/diamonds.tsv > /dev/null" \

-n "xan filter" "xan filter 'carat > 1 and cut eq \"Premium\" and price < 3000' docs/data/diamonds.tsv > /dev/null" \

-n "tva expr -m skip-null" "tva expr -H -m skip-null -E 'if(@carat > 1 and @cut eq q(Premium) and @price < 3000, @0, null)' docs/data/diamonds.tsv > /dev/null" \

-n "tva expr -m filter" "tva expr -H -m filter -E '@carat > 1 and @cut eq q(Premium) and @price < 3000' docs/data/diamonds.tsv > /dev/null" \

-n "tva filter" "tva filter -H --gt carat:1 --str-eq cut:Premium --lt price:3000 docs/data/diamonds.tsv > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tsv-filter | 21.0 ± 1.2 | 18.8 | 24.0 | 1.00 |

xan filter | 63.3 ± 2.2 | 59.9 | 73.8 | 3.01 ± 0.20 |

tva expr -m skip-null | 54.5 ± 3.0 | 50.7 | 68.6 | 2.59 ± 0.21 |

tva expr -m filter | 42.3 ± 2.2 | 39.5 | 53.9 | 2.01 ± 0.16 |

tva filter | 21.0 ± 1.6 | 18.8 | 31.2 | 1.00 ± 0.10 |

select

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_select.tmp.md \

-n "tsv-select" "tsv-select -H -f carat,cut,price docs/data/diamonds.tsv > /dev/null" \

-n "xan select" "xan select 'carat,cut,price' docs/data/diamonds.tsv > /dev/null" \

-n "xan select -e" "xan select -e '[carat, cut, price]' docs/data/diamonds.tsv > /dev/null" \

-n "tva expr -m eval" "tva expr -H -m eval -E '[@carat, @cut, @price]' docs/data/diamonds.tsv > /dev/null" \

-n "tva select" "tva select -H -f carat,cut,price docs/data/diamonds.tsv > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tsv-select | 21.0 ± 1.2 | 18.6 | 24.6 | 1.03 ± 0.09 |

xan select | 58.8 ± 2.7 | 54.4 | 72.5 | 2.87 ± 0.23 |

xan select -e | 69.2 ± 1.8 | 65.8 | 73.2 | 3.38 ± 0.24 |

tva expr -m eval | 57.3 ± 2.7 | 53.8 | 68.3 | 2.80 ± 0.22 |

tva select | 20.5 ± 1.3 | 17.6 | 24.5 | 1.00 |

reverse

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_reverse.tmp.md \

-n "tva reverse" "tva reverse docs/data/diamonds.tsv > /dev/null" \

-n "tva reverse -H" "tva reverse -H docs/data/diamonds.tsv > /dev/null" \

-n "tva reverse --no-mmap" "tva reverse --no-mmap docs/data/diamonds.tsv > /dev/null" \

-n "tac" "tac docs/data/diamonds.tsv > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tva reverse | 92.0 ± 3.2 | 86.0 | 103.1 | 5.28 ± 0.39 |

tva reverse -H | 94.6 ± 5.2 | 88.6 | 116.8 | 5.43 ± 0.46 |

tva reverse --no-mmap | 17.4 ± 1.1 | 14.6 | 21.6 | 1.00 |

tac | 50.2 ± 3.0 | 47.1 | 66.9 | 2.88 ± 0.26 |

keep-header -- tac | 56.7 ± 3.2 | 52.9 | 69.3 | 3.25 ± 0.28 |

tva reverse 的基准测试显示了一个反直觉的结果:

分析:

- mmap 模式比

--no-mmap慢5.3 倍 - 甚至低于

tac(2.88x)

原因:

- 页缓存预读失效: Linux 内核的预读机制优化顺序读取,反向扫描破坏预读策略

- TLB 抖动: 随机访问模式导致页表遍历开销增加

- 缺页中断: 小文件(5MB)完全适合内存,

read_to_end一次性读入后连续访问更缓存友好

代码层面:

#![allow(unused)]

fn main() {

// mmap 模式: 反向迭代触发随机访问

for i in memrchr_iter(b'\n', slice) { // 反向查找换行符

writer.write_all(&slice[i + 1..following_line_start])?;

}

// --no-mmap 模式: Vec<u8> 连续存储,CPU 缓存友好

let mut buf = Vec::new();

f.read_to_end(&mut buf)?; // 一次性读入

}启示: 对于小文件(<100MB)或反向/随机访问模式,--no-mmap 显著优于 mmap。

uniq

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_uniq.tmp.md \

-n "tsv-uniq -f carat" "tsv-uniq -H -f carat docs/data/diamonds.tsv > /dev/null" \

-n "tsv-uniq -f 1" "tsv-uniq -H -f 1 docs/data/diamonds.tsv > /dev/null" \

-n "tva uniq -f carat" "tva uniq -H -f carat docs/data/diamonds.tsv > /dev/null" \

-n "tva uniq -f 1" "tva uniq -H -f 1 docs/data/diamonds.tsv > /dev/null" \

-n "cut sort uniq" "cut -f 1 docs/data/diamonds.tsv | sort | uniq > /dev/null" \

-n "tsv-uniq" "tsv-uniq docs/data/diamonds.tsv > /dev/null" \

-n "tva uniq" "tva uniq docs/data/diamonds.tsv > /dev/null" \

-n "sort uniq" "sort docs/data/diamonds.tsv | uniq > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tsv-uniq -f carat | 35.5 ± 11.3 | 23.9 | 64.8 | 1.00 |

tsv-uniq -f 1 | 37.3 ± 11.5 | 26.7 | 86.5 | 1.05 ± 0.46 |

tva uniq -f carat | 41.3 ± 13.2 | 23.4 | 91.9 | 1.16 ± 0.52 |

tva uniq -f 1 | 44.7 ± 10.5 | 26.4 | 74.1 | 1.26 ± 0.50 |

cut sort uniq | 175.8 ± 42.4 | 138.4 | 311.1 | 4.96 ± 1.97 |

tsv-uniq | 64.4 ± 17.8 | 41.4 | 103.0 | 1.81 ± 0.76 |

tva uniq | 44.2 ± 6.7 | 30.9 | 63.3 | 1.25 ± 0.44 |

sort uniq | 59.2 ± 11.5 | 47.8 | 96.4 | 1.67 ± 0.62 |

append

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_append.tmp.md \

-n "tsv-append" "tsv-append docs/data/diamonds.tsv docs/data/diamonds.tsv > /dev/null" \

-n "tva append" "tva append docs/data/diamonds.tsv docs/data/diamonds.tsv > /dev/null" \

-n "cat" "cat docs/data/diamonds.tsv docs/data/diamonds.tsv > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tsv-append | 34.3 ± 3.0 | 30.4 | 47.9 | 1.12 ± 0.10 |

tva append | 33.8 ± 1.7 | 31.0 | 38.0 | 1.11 ± 0.06 |

cat | 30.5 ± 0.9 | 28.4 | 33.3 | 1.00 |

sort

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_sort.tmp.md \

-n "tva sort -k 2" "tva sort -H -k 2 docs/data/diamonds.tsv > /dev/null" \

-n "sort -k 2" "sort -k 2 docs/data/diamonds.tsv > /dev/null" \

-n "tva sort" "tva sort docs/data/diamonds.tsv > /dev/null" \

-n "sort" "sort docs/data/diamonds.tsv > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

tva sort | 37.6 ± 3.5 | 30.8 | 48.9 | 1.00 |

sort | 39.5 ± 3.3 | 33.7 | 50.2 | 1.05 ± 0.13 |

keep-header -- sort | 42.8 ± 3.6 | 38.6 | 61.0 | 1.14 ± 0.14 |

tva keep-header -- sort | 74.0 ± 3.3 | 68.8 | 85.7 | 1.97 ± 0.20 |

keep-header

hyperfine \

--warmup 3 \

--min-runs 50 \

--export-markdown tva_keep-header.tmp.md \

-n "sort" "sort docs/data/diamonds.tsv > /dev/null" \

-n "keep-header -- sort" "keep-header docs/data/diamonds.tsv -- sort > /dev/null" \

-n "tva keep-header -- sort" "tva keep-header docs/data/diamonds.tsv -- sort > /dev/null" \

-n "tac" "tac docs/data/diamonds.tsv > /dev/null" \

-n "keep-header -- tac" "keep-header docs/data/diamonds.tsv -- tac > /dev/null" \

-n "tva keep-header -- tac" "tva keep-header docs/data/diamonds.tsv -- tac > /dev/null"

| Command | Mean [ms] | Min [ms] | Max [ms] | Relative |

|---|---|---|---|---|

sort | 32.7 ± 1.6 | 29.6 | 37.9 | 1.32 ± 0.12 |

keep-header -- sort | 35.3 ± 2.1 | 33.0 | 46.6 | 1.42 ± 0.14 |

tva keep-header -- sort | 36.4 ± 1.8 | 31.8 | 43.5 | 1.46 ± 0.13 |

tac | 45.8 ± 1.0 | 43.6 | 48.2 | 1.84 ± 0.15 |

keep-header -- tac | 24.9 ± 1.9 | 22.7 | 35.3 | 1.00 |

tva keep-header -- tac | 26.8 ± 1.9 | 23.5 | 38.6 | 1.08 ± 0.11 |

Selection & Filtering Documentation

This document explains how to use the selection, filtering, and sampling commands in tva:

select, filter, slice, and sample. These commands allow you to subset your

data based on structure (columns), values (rows), position (index), or randomly.

Introduction

Data analysis often begins with selecting the relevant subset of data:

select: Selects and reorders columns (e.g., “keep onlynameandemail”).filter: Selects rows where a condition is true (e.g., “keep rows whereage > 30”).slice: Selects rows by their position (index) in the file (e.g., “keep rows 10-20”).sample: Randomly selects a subset of rows.

Field Syntax

All tools use a unified syntax to identify fields (columns). See Field Syntax Documentation for details.

select (Column Selection)

The select command allows you to keep only specific columns and reorder them.

Basic Usage

tva select [input_files...] --fields <columns>

--fields/-f: Comma-separated list of columns to select.- Names:

name,email - Indices:

1,3(1-based) - Ranges:

1-3,start_col-end_col - Wildcards:

user_*,*_id

- Names:

Examples

1. Select by Name and Index

Consider the dataset docs/data/us_rent_income.tsv:

GEOID NAME variable estimate moe

01 Alabama income 24476 136

01 Alabama rent 747 3

02 Alaska income 32940 508

...

To keep only the state name (NAME) and the estimate value (estimate):

tva select docs/data/us_rent_income.tsv -f NAME,estimate

Output:

NAME estimate

Alabama 24476

Alabama 747

Alaska 32940

...

2. Reorder Columns

You can change the order of columns. Let’s move variable to the first column:

tva select docs/data/us_rent_income.tsv -f variable,estimate,NAME

Output:

variable estimate NAME

income 24476 Alabama

rent 747 Alabama

income 32940 Alaska

...

3. Select by Range and Wildcard

Consider docs/data/billboard.tsv which has many week columns (wk1, wk2, wk3…):

artist track wk1 wk2 wk3

2 Pac Baby Don't Cry 87 82 72

2Ge+her The Hardest Part 91 87 92

To select the artist, track, and all week columns:

tva select docs/data/billboard.tsv -f artist,track,wk*

Or using a range (if you know the indices):

tva select docs/data/billboard.tsv -f 1-2,3-5

filter (Row Filtering)

The filter command selects rows where a condition is true. It supports field-based tests,

expressions, empty/blank checks, and field-to-field comparisons.

Basic Usage

tva filter [input_files...] [options]

Filter tests can be combined (default is AND logic, use --or for OR logic).

Filter Types

1. Expression Filter

Use the -E option to filter with an expression:

tva filter docs/data/us_rent_income.tsv -H -E '@estimate > 30000'

2. Empty/Blank Checks

--empty <field>: True if the field is empty (no characters)--not-empty <field>: True if the field is not empty--blank <field>: True if the field is empty or all whitespace--not-blank <field>: True if the field contains a non-whitespace character

tva filter docs/data/us_rent_income.tsv --not-empty NAME

3. Numeric Comparison

Format: --<op> <field>:<value>

--eq,--ne,--gt,--ge,--lt,--le

tva filter docs/data/us_rent_income.tsv --gt estimate:30000

Output:

GEOID NAME variable estimate moe

02 Alaska income 32940 508

04 Arizona income 31614 242

06 California income 33095 172

...

4. String Comparison

--str-eq,--str-ne: String equality/inequality--str-gt,--str-ge,--str-lt,--str-le: String ordering--istr-eq,--istr-ne: Case-insensitive string comparison--str-in-fld,--str-not-in-fld: Substring test--istr-in-fld,--istr-not-in-fld: Case-insensitive substring test

tva filter docs/data/us_rent_income.tsv --str-eq variable:rent

Output:

GEOID NAME variable estimate moe

01 Alabama rent 747 3

02 Alaska rent 1200 13

04 Arizona rent 976 4

...

5. Regular Expression

--regex <field>:<pattern>: Field matches regex--iregex <field>:<pattern>: Case-insensitive regex match--not-regex <field>:<pattern>: Field does not match regex--not-iregex <field>:<pattern>: Case-insensitive non-match

tva filter docs/data/billboard.tsv --regex track:"Baby"

Output:

artist track wk1 wk2 wk3

2 Pac Baby Don't Cry 87 82 72

Beenie Man Girls Dem Sugar 87 70 63

...

6. Length Comparison

--char-len-eq,--char-len-ne,--char-len-gt,--char-len-ge,--char-len-lt,--char-len-le: Character length--byte-len-eq,--byte-len-ne,--byte-len-gt,--byte-len-ge,--byte-len-lt,--byte-len-le: Byte length

tva filter docs/data/billboard.tsv --char-len-gt track:10

7. Field Type Checks

--is-numeric <field>: True if field can be parsed as a number--is-finite <field>: True if field is numeric and finite--is-nan <field>: True if field is NaN--is-infinity <field>: True if field is positive or negative infinity

tva filter docs/data/us_rent_income.tsv --is-numeric estimate

8. Field-to-Field Comparison

--ff-eq,--ff-ne,--ff-lt,--ff-le,--ff-gt,--ff-ge: Numeric field-to-field--ff-str-eq,--ff-str-ne: String field-to-field--ff-istr-eq,--ff-istr-ne: Case-insensitive string field-to-field--ff-absdiff-le <f1>:<f2>:<num>: Absolute difference <= NUM--ff-absdiff-gt <f1>:<f2>:<num>: Absolute difference > NUM--ff-reldiff-le <f1>:<f2>:<num>: Relative difference <= NUM--ff-reldiff-gt <f1>:<f2>:<num>: Relative difference > NUM

tva filter docs/data/us_rent_income.tsv --ff-gt estimate:moe

Common Options

--or: Evaluate tests as OR instead of AND-v,--invert: Invert the filter, selecting non-matching rows-c,--count: Print only the count of matching data rows--label <header>: Label matched records instead of filtering (outputs 1/0)--label-values <pass:fail>: Custom values for –label (default: 1:0)

slice (Row Selection by Index)

The slice command selects rows based on their integer index (position). Indices are 1-based.

Basic Usage

tva slice [input_files...] --rows <range> [options]

--rows/-r: The range of rows to keep (e.g.,1-10,5,100-). Can be specified multiple times.--invert/-v: Invert selection (drop the specified rows).--header/-H: Always preserve the first row (header).

Examples

1. Keep Specific Range (Head/Body)

To inspect the first 5 rows of docs/data/billboard.tsv (including header):

tva slice docs/data/billboard.tsv -r 1-5

Output:

artist track wk1 wk2 wk3

2 Pac Baby Don't Cry 87 82 72

2Ge+her The Hardest Part 91 87 92

...

2. Drop Header (Data Only)

Sometimes you want to process data without the header. You can drop the first row using --invert:

tva slice docs/data/billboard.tsv -r 1 --invert

Output:

2 Pac Baby Don't Cry 87 82 72

2Ge+her The Hardest Part 91 87 92

...

3. Keep Header and Specific Data Rows

To keep the header (row 1) and a slice of data from the middle (rows 10-15), use the -H flag:

tva slice docs/data/us_rent_income.tsv -H -r 10-15

This ensures the first line is always printed, even if it’s not in the range 10-15.

sample (Random Sampling)

The sample command randomly selects a subset of rows. This is useful for exploring large datasets.

Basic Usage

tva sample [input_files...] [options]

--rate/-r: Sampling rate (probability 0.0-1.0). (Bernoulli sampling)--n/-n: Exact number of rows to sample. (Reservoir sampling)--seed/-s: Random seed for reproducibility.

Examples

1. Sample by Rate

To keep approximately 10% of the rows from docs/data/us_rent_income.tsv:

tva sample docs/data/us_rent_income.tsv -r 0.1

2. Sample Exact Number

To pick exactly 5 random rows for inspection:

tva sample docs/data/us_rent_income.tsv -n 5

Output (example):

GEOID NAME variable estimate moe

35 New Mexico rent 809 11

55 Wisconsin income 32018 247

18 Indiana rent 782 5

...

Data Transformation Documentation

This document explains how to use the data transformation commands in tva: longer, *

*wider**, fill, blank, and transpose. These commands allow you to reshape and

restructure your data.

Introduction

Data transformation involves changing the structure or values of a dataset. tva provides tools

for:

- Pivoting:

longer: Reshapes “wide” data (many columns) into “long” data (many rows).wider: Reshapes “long” data into “wide” data.

- Completion:

fill: Fills missing values with previous non-missing values (LOCF) or constants.blank: The inverse offill; replaces repeated values with empty strings (sparsify).

- Transposition:

transpose: Swaps rows and columns (matrix transposition).

longer (Wide to Long)

The longer command is designed to reshape “wide” data into a “long” format. “Wide” data often has

column names that are actually values of a variable. For example, a table might have columns like

2020, 2021, 2022 representing years. longer gathers these columns into a pair of key-value

columns (e.g., year and population), making the data “longer” (more rows, fewer columns) and

easier to analyze.

Basic Usage

tva longer [input_files...] --cols <columns> [options]

--cols/-c: Specifies which columns to reshape. You can use column names, indices ( 1-based), or ranges (e.g.,3-5,wk*).--names-to: The name of the new column that will store the original column headers ( default: “name”).--values-to: The name of the new column that will store the data values (default: “value”).

Examples

1. String Data in Column Names

Consider a dataset docs/data/relig_income.tsv where income brackets are spread across column

names:

religion <$10k $10-20k $20-30k

Agnostic 27 34 60

Atheist 12 27 37

Buddhist 27 21 30

To tidy this, we want to turn the income columns into a single income variable:

tva longer docs/data/relig_income.tsv --cols 2-4 --names-to income --values-to count

Output:

religion income count

Agnostic <$10k 27

Agnostic $10-20k 34

Agnostic $20-30k 60

...

2. Numeric Data in Column Names

The docs/data/billboard.tsv dataset records song rankings by week (wk1, wk2, etc.):

artist track wk1 wk2 wk3

2 Pac Baby Don't Cry 87 82 72

2Ge+her The Hardest Part 91 87 92

We can gather the week columns and strip the “wk” prefix to get a clean number:

tva longer docs/data/billboard.tsv --cols "wk*" --names-to week --values-to rank --names-prefix "wk" --values-drop-na

--names-prefix "wk": Removes “wk” from the start of the column names (e.g., “wk1” -> “1”).--values-drop-na: Drops rows where the rank is missing (empty).

Output:

artist track week rank

2 Pac Baby Don't Cry 1 87

2 Pac Baby Don't Cry 2 82

...

3. Many Variables in Column Names (Regex Extraction)

Sometimes column names contain multiple pieces of information. For example, in the

docs/data/who.tsv dataset, columns like new_sp_m014 encode:

new: new cases (constant)sp: diagnosis methodm: gender (m/f)014: age group (0-14)

country iso2 iso3 year new_sp_m014 new_sp_f014

Afghanistan AF AFG 1980 NA NA

We can use --names-pattern with a regular expression to extract these parts into multiple

columns:

tva longer docs/data/who.tsv --cols "new_*" --names-to diagnosis gender age --names-pattern "new_?(.*)_(.)(.*)"

--names-to: We provide 3 names for the 3 capture groups in the regex.--names-pattern: The regexnew_?(.*)_(.)(.*)captures:.*(diagnosis, e.g., “sp”).(gender, e.g., “m”).*(age, e.g., “014”)

Output:

country iso2 iso3 year diagnosis gender age value

Afghanistan AF AFG 1980 sp m 014 NA

...

4. Splitting Column Names with a Separator

If column names are consistently separated by a character, you can use --names-sep.

Consider a dataset docs/data/semester.tsv where columns represent year_semester:

student 2020_1 2020_2 2021_1

Alice 85 90 88

Bob 78 82 80

We can split the column names into two separate columns: year and semester.

tva longer docs/data/semester.tsv --cols 2-4 --names-to year semester --names-sep "_"

Output:

student year semester value

Alice 2020 1 85

Alice 2020 2 90

Alice 2021 1 88

Bob 2020 1 78

Bob 2020 2 82

Bob 2021 1 80

wider (Long to Wide)

The wider command is the inverse of longer. It spreads a key-value pair across multiple columns,

increasing the number of columns and decreasing the number of rows. This is useful for creating

summary tables or reshaping data for tools that expect a matrix-like format.

Basic Usage

tva wider [input_files...] --names-from <column> --values-from <column> [options]

--names-from: The column containing the new column names.--values-from: The column containing the new column values.--id-cols: (Optional) Columns that uniquely identify each row. If not specified, all columns exceptnames-fromandvalues-fromare used.--values-fill: (Optional) Value to use for missing cells (default: empty).--names-sort: (Optional) Sort the new column headers alphabetically.--op: (Optional) Aggregation operation (e.g.,sum,mean,count,last). Default:last.

Comparison: stats vs wider

| Feature | stats (Group By) | wider (Pivot) |

|---|---|---|

| Goal | Summarize to rows | Reshape to columns |

| Output | Long / Tall | Wide / Matrix |

Example 1: US Rent and Income

Consider the dataset docs/data/us_rent_income.tsv:

GEOID NAME variable estimate moe

01 Alabama income 24476 136

01 Alabama rent 747 3

02 Alaska income 32940 508

02 Alaska rent 1200 13

Here, variable contains the type of measurement (income or rent), and estimate contains the

value. To make this easier to compare, we can widen the data:

tva wider docs/data/us_rent_income.tsv --names-from variable --values-from estimate

Output:

GEOID NAME moe income rent

01 Alabama 136 24476

01 Alabama 3 747

02 Alaska 508 32940

02 Alaska 13 1200

...

Understanding ID Columns:

By default, wider uses all columns except names-from and values-from as ID columns. In this

example, GEOID, NAME, and moe are treated as IDs.

Because moe (margin of error) is different for the income row (136) and the rent row (3),

wider keeps them as separate rows to preserve data.

To explicitly specify that only GEOID and NAME identify a row (and drop moe):

tva wider docs/data/us_rent_income.tsv --names-from variable --values-from estimate --id-cols GEOID,NAME

Example 2: Capture-Recapture Data (Filling Missing Values)

The docs/data/fish_encounters.tsv dataset describes when fish were detected by monitoring

stations. Some fish are seen at some stations but not others.

fish station seen

4842 Release 1

4842 I80_1 1

4842 Lisbon 1

4843 Release 1

4843 I80_1 1

4844 Release 1

If we widen this by station, we will have missing values for stations where a fish wasn’t seen. We

can use --values-fill to fill these gaps with 0.

tva wider docs/data/fish_encounters.tsv --names-from station --values-from seen --values-fill 0

Output:

fish Release I80_1 Lisbon

4842 1 1 1

4843 1 1 0

4844 1 0 0

Without --values-fill 0, the missing cells would be empty strings (default).

Complex Reshaping: Longer then Wider

Sometimes data requires multiple steps to be fully tidy. A common pattern is to make data longer to fix column headers, and then wider to separate variables.

Consider the docs/data/world_bank_pop.tsv dataset (a subset):

country indicator 2000 2001

ABW SP.URB.TOTL 42444 43048

ABW SP.URB.GROW 1.18 1.41

AFG SP.URB.TOTL 4436311 4648139

AFG SP.URB.GROW 3.91 4.66

Here, years are in columns (needs longer) and variables are in the indicator column (needs

wider). We can pipe tva commands to solve this:

tva longer docs/data/world_bank_pop.tsv --cols 3-4 --names-to year --values-to value | \

tva wider --names-from indicator --values-from value

longer: Reshapes years (cols 3-4) intoyearandvalue.wider: Takes the stream, usesindicatorfor new column names, and fills them withvalue.countryandyearautomatically become ID columns.

Output:

country year SP.URB.TOTL SP.URB.GROW

ABW 2000 42444 1.18

ABW 2001 43048 1.41

AFG 2000 4436311 3.91

AFG 2001 4648139 4.66

Handling Duplicates (Aggregation)

When widening data, you might encounter multiple rows for the same ID and name combination.

tidyr: Often creates list-columns or requires an aggregation function (values_fn).tva: Supports aggregation via the--opargument.

By default (--op last), tva overwrites previous values with the last observed value.

However, you can specify an operation to aggregate these values, similar to values_fn in tidyr

or crosstab in datamash.

Supported operations: count, sum, mean, min, max, first, last, median, mode,

stdev, variance, etc.

Example: Summing values

Example using docs/data/warpbreaks.tsv:

wool tension breaks

A L 26

A L 30

A L 54

...

If we want to sum the breaks for each wool/tension pair:

tva wider docs/data/warpbreaks.tsv --names-from wool --values-from breaks --op sum

Output:

L 110 47

M 68 62

H 81 96

(For A-L: 26 + 30 + 54 = 110)

Example: Crosstab (Counting)

You can also use wider to create a frequency table (crosstab) by using --op count. In this case,

--values-from is optional. But to get a proper crosstab, you usually want to group by the other

factor (here, tension), so you should specify it as the ID column.

tva wider docs/data/warpbreaks.tsv --names-from wool --op count --id-cols tension

Output:

L 3 3

M 3 3

H 3 3

(Each combination appears 3 times in this dataset)

Comparison: stats vs wider (Aggregation)

Both tva stats (if available) and tva wider --op ... can aggregate data, but they produce

different structures:

| Feature | tva stats (Group By) | tva wider (Pivot) |

|---|---|---|

| Goal | Summarize data into rows | Reshape data into columns |

| Output Shape | Long / Tall | Wide / Matrix |

| Columns | Fixed (Group + Stat) | Dynamic (Values become Headers) |

| Best For | General summaries, reporting | Cross-tabulation, heatmaps |

Example: Data:

Group Category Value

A X 10

A Y 20

B X 30

B Y 40

tva stats (Sum by Group):

Group Sum_Value

A 30

B 70

(Retains vertical structure)

tva wider (Sum, name from Category):

Group X Y

A 10 20

B 30 40

(Spreads categories horizontally)

fill (Fill Missing Values)

The fill command fills missing values in selected columns using the previous non-missing value (

Last Observation Carried Forward, or LOCF) or a constant. This is common in time-series data or

reports where values are only listed when they change.

Basic Usage

tva fill [options]

--field/-f: Columns to fill.--direction: Currently onlydown(default) is supported.--value/-v: If provided, fills with this constant value instead of the previous value.--na: String to consider as missing (default: empty string).

Example: Filling Down

Input docs/data/pet_names.tsv:

Pet Name Age

Dog Rex 5

Spot 3

Cat Felix 2

Tom 4

To fill the Pet column downwards:

tva fill -H -f Pet docs/data/pet_names.tsv

Output:

Pet Name Age

Dog Rex 5

Dog Spot 3

Cat Felix 2

Cat Tom 4

Example: Filling with Constant

To replace missing values with “Unknown”:

tva fill -H -f Pet -v "Unknown" docs/data/pet_names.tsv

blank (Sparsify / Inverse Fill)

The blank command replaces repeated values in selected columns with an empty string (or a custom

placeholder). This is the inverse of fill and is useful for creating human-readable reports where

repeated group labels are visually redundant.

Basic Usage

tva blank [options]

--field/-f: Columns to blank.--ignore-case/-i: Ignore case when comparing values.

Example

Input docs/data/blank_example.tsv:

Group Item

A 1

A 2

B 1

Command:

tva blank -H -f Group docs/data/blank_example.tsv

Output:

Group Item

A 1

2

B 1

transpose (Matrix Transpose)

The transpose command swaps the rows and columns of a TSV file. It reads the entire file into

memory and performs a matrix transposition.

Basic Usage

tva transpose [input_file] [options]

Notes

- Strict Mode:

transposeexpects a rectangular matrix. All rows must have the same number of columns as the first row. If the file is jagged (rows have different lengths), the command will fail with an error. - Memory Usage: Since it reads the whole file, be cautious with very large files.

Examples

Transpose a table

Transpose docs/data/relig_income.tsv:

tva transpose docs/data/relig_income.tsv

Output (first 5 lines):

religion Agnostic Atheist Buddhist

<$10k 27 12 27

$10-20k 34 27 21

$20-30k 60 37 30

$30-40k 81 25 34

Detailed Options

| Option | Description |

|---|---|

--cols <cols> | (Longer) Columns to reshape. Supports indices (1, 1-3), names (year), and wildcards (wk*). |

--names-to <names...> | (Longer) Name(s) for the new key column(s). |

--values-to <name> | (Longer) Name for the new value column. |

--names-prefix <str> | (Longer) String to remove from start of column names. |

--names-sep <str> | (Longer) Separator to split column names. |

--names-pattern <regex> | (Longer) Regex with capture groups for column names. |

--values-drop-na | (Longer) Drop rows where value is empty. |

--names-from <col> | (Wider) Column for new headers. |

--values-from <col> | (Wider) Column for new values. |

--id-cols <cols> | (Wider) Columns identifying rows. |

--values-fill <str> | (Wider) Fill value for missing cells. |

--names-sort | (Wider) Sort new column headers. |

--op <op> | (Wider) Aggregation operation (sum, mean, count, etc.). |

--field <cols> | (Fill/Blank) Columns to process. |

--direction <dir> | (Fill) Direction to fill (down is default). |

--value <val> | (Fill) Constant value to fill with. |

--na <str> | (Fill) String to treat as missing (default: empty). |

--ignore-case | (Blank) Ignore case when comparing values. |

Comparison with R tidyr

| Feature | tidyr::pivot_longer | tva longer |

|---|---|---|

| Basic pivoting | cols, names_to, values_to | Supported |

| Drop NAs | values_drop_na = TRUE | --values-drop-na |

| Prefix removal | names_prefix | --names-prefix |

| Separator split | names_sep | --names-sep |

| Regex extraction | names_pattern | --names-pattern |

| Feature | tidyr::pivot_wider | tva wider |

|---|---|---|

| Basic pivoting | names_from, values_from | Supported |

| ID columns | id_cols (default: all others) | --id-cols (default: all others) |

| Fill missing | values_fill | --values-fill |

| Sort columns | names_sort | --names-sort |

| Aggregation | values_fn | --op (sum, mean, count, etc.) |

| Multiple values | values_from = c(a, b) | Not supported (single column only) |

| Multiple names | names_from = c(a, b) | Not supported (single column only) |

| Implicit missing | names_expand, id_expand | Not supported |

TVA’s expr language

The expr language evaluates expressions (like spreadsheet formulas) to transform TSV data.

Quick Examples

# Basic arithmetic

tva expr -E '42 + 3.14'

# Output: 45.14

# String manipulation

tva expr -E '"hello" | upper()'

# Output: HELLO

# Using higher-order functions (list results expand to multiple columns)

tva expr -E "map([1,2,3,4,5], x => x * x)"

# Output: 1 4 9 16 25

Topics

Literals

Integer, float, string, boolean, null, and list literals.

42, 3.14, "hello", true, null, [1, 2, 3]

Column References

Use @ prefix to reference columns.

@1, @col_name, @"col name"

Variable Binding

Use as to bind values to variables.

@price * @qty as @total; @total * 1.1

Operators

Arithmetic, comparison, logical, and pipe operators.

+ - * / %, == != < >, and or, |

Function Calls

Prefix calls, pipe calls, and method calls.

trim(@name)

@name | trim() | upper()

@name.trim().upper()

Documentation Index

- Literals - Literal syntax and type system

- Variables - Column references and variable binding

- Operators - Operator precedence and details

- Functions - Complete function reference

- Syntax Guide - Complete syntax documentation

- Rosetta Code - Fun programs

Expr Commands

Comparing modes and other commands:

| Command | What it does | Input row | Output row |

|---|---|---|---|

expr/expr -m eval | Evaluate to new row | a, b | c |

extend/expr -m extend | Add new column(s) | a, b | a, b, c |

mutate/expr -m mutate | Modify column value | a, b | a, c |

expr -m skip-null | Skip null results | a, b | c or nothing |

expr -m filter | Keep or discard row | a, b | a, b or nothing |

filter | a, b | a, b or nothing | |

expr -E '[@b, @c]' | Select columns | a, b, c | b, c |

select | a, b, c | b, c | |

join | Join two tables | a, b and a, c | a, b, c |

Output Modes

The expr command supports five output modes controlled by the -m (or --mode) flag:

eval mode (default, -m eval or -m e)

Evaluates the expression and outputs only the result. The original row data is discarded.

# Simple arithmetic expression (no input needed)

tva expr -E "10 + 20"

# Evaluate expression with inline row data

tva expr -n "price,qty" -r "100,2" -E "@price * @qty"

# String manipulation with inline data

tva expr -n "name" -r " alice " -E '@name | trim() | upper()'

# Calculate from file data

tva expr -H -E "@price / @carat" docs/data/diamonds.tsv | tva slice -r 5

Use this mode when you want to compute new values without preserving the original columns.

extend mode (-m extend or -m a)

Evaluates the expression and appends the result as new column(s) to the original row.

# Add a single column

tva expr -H -m extend -E "@price / @carat as @price_per_carat" docs/data/diamonds.tsv | tva slice -r 5

# Add multiple columns using list expression

tva expr -H -m extend -E "[@price / @carat as @price_per_carat, @carat as @carat_rounded]" docs/data/diamonds.tsv | tva slice -r 5

Key behaviors:

- The original row is preserved

- Expression results are appended as new columns

- Header names come from

as @namebindings - List expressions create multiple new columns

mutate mode (-m mutate or -m u)

Modifies an existing column in place. The expression must include an as @column_name binding to

specify which column to modify.

# Modify price column in place

tva expr -H -m mutate -E "@price / @carat as @price" docs/data/diamonds.tsv | tva slice -r 5

Key behaviors:

- Only the specified column is modified

- All other columns and the header remain unchanged

- The

as @column_namebinding is required - Column name must exist in the input (numeric indices like

as @2are not supported)

skip-null mode (-m skip-null or -m s)

Evaluates the expression and outputs the result, but skips rows where the result is null.

# Keep rows where carat > 1 and cut is Premium and price < 3000

tva expr -H -m skip-null -E 'if(@carat > 1 and @cut eq q(Premium) and @price < 3000, @0, null)' docs/data/diamonds.tsv | tva slice -r 5

Key behaviors:

- Rows with null results are excluded from output

- Useful for filtering based on complex conditions

- Return

@0to preserve the original row, or any other value to output that value

filter mode (-m filter or -m f)

Evaluates a boolean expression and outputs the original row only when the expression is true.

# Filter with a simple condition

tva expr -H -m filter -E "@price > 10000" docs/data/diamonds.tsv | tva slice -r 5

# Filter with multiple conditions

tva expr -H -m filter -E '@carat > 1 and @cut eq q(Premium) and @price < 3000' docs/data/diamonds.tsv | tva slice -r 5

Key behaviors:

- The original row and header are preserved

- Row is output only if the expression evaluates to true

- Expression should return a boolean (non-zero numbers and non-empty strings are truthy)

- Similar to

tva filterbut allows complex expressions

Notes

- Performance: For simple filtering or column selection, use

tva filterortva selectinstead - they are ~2x faster. Usetva expronly when you need functions, complex expressions, or calculations. - Type conversion: No implicit type conversion - use explicit functions like

int(),float(),string() - String comparison: Uses

eq,ne,lt, etc. (not==,!=) - Pipe operator:

|passes left value as first argument to right function - Streaming: All expressions are evaluated per row during streaming

- Persistent variables: Variables starting with

__(e.g.,@__total) persist across rows, useful for running totals

Data Organization Documentation

This document explains how to use the data organization commands in tva: sort, reverse,

join, append, and split. These commands allow you to rearrange, combine, and split

your data.

Introduction

Data organization involves sorting rows, combining multiple datasets, or splitting data into multiple files. These operations are essential for data preparation and pipeline construction.

- Sorting & Reversing:

sort: Sorts rows based on one or more key fields.reverse: Reverses the order of lines (liketac), optionally keeping the header at the top.

- Combining:

join: Joins two files based on common keys.append: Concatenates multiple TSV files, handling headers correctly.

- Splitting:

split: Splits a file into multiple files (by size, key, or random).

sort (External Sort)

The sort command sorts the lines of a TSV file based on the values in specified columns. It

supports both lexicographic (string) and numeric sorting.

Basic Usage

tva sort [input_files...] [options]

--key/-k: Specify the field(s) to use as the sort key. You can use 1-based indices ( e.g.,1,2) or ranges (e.g.,2,4-5).--numeric/-n: Compare the key fields numerically instead of lexicographically.--reverse/-r: Reverse the sort result (descending order).

Examples

1. Sort by a single column (Lexicographic)

Sort docs/data/us_rent_income.tsv by the NAME column (column 2):

tva sort docs/data/us_rent_income.tsv -k 2

Output (first 5 lines):

01 Alabama income 24476 136

01 Alabama rent 747 3

02 Alaska income 32940 508

02 Alaska rent 1200 13

04 Arizona income 27517 148

2. Sort numerically

Sort docs/data/us_rent_income.tsv by the estimate column (column 4) numerically:

tva sort docs/data/us_rent_income.tsv -k 4 -n

Output (first 5 lines):

GEOID NAME variable estimate moe

05 Arkansas rent 709 5

01 Alabama rent 747 3

04 Arizona rent 972 4

02 Alaska rent 1200 13

3. Sort by multiple columns

Sort first by GEOID (column 1), then by NAME (column 2):

tva sort docs/data/us_rent_income.tsv -k 1,2

reverse (Reverse Lines)

The reverse command reverses the order of lines in the input. This is similar to the Unix tac

command but includes features specifically for tabular data, such as header preservation.

Basic Usage

tva reverse [input_files...] [options]

--header/-H: Treat the first line as a header and keep it at the top of the output.

Examples

Reverse a file keeping the header

Reverse docs/data/us_rent_income.tsv but keep the header line at the top:

tva reverse docs/data/us_rent_income.tsv --header

Output (first 5 lines):

GEOID NAME variable estimate moe

06 California rent 1358 3

06 California income 29454 109

05 Arkansas rent 709 5

05 Arkansas income 23789 165

join

Joins lines from a TSV data stream against a filter file using one or more key fields.

Examples

1. Join two files by a common key

Using docs/data/who.tsv (contains iso3) and docs/data/world_bank_pop.tsv (contains country

with ISO3 codes):

tva join -H --filter-file docs/data/who.tsv --key-fields iso3 --data-fields country docs/data/world_bank_pop.tsv

Output:

country indicator 2000 2001

AFG SP.URB.TOTL 4436311 4648139

AFG SP.URB.GROW 3.91 4.66

2. Append fields from the filter file

To add the year column from who.tsv to the output:

tva join -H --filter-file docs/data/who.tsv -k iso3 -d country --append-fields year docs/data/world_bank_pop.tsv

Output:

country indicator 2000 2001 year

AFG SP.URB.TOTL 4436311 4648139 1980

AFG SP.URB.GROW 3.91 4.66 1980

append

Concatenates TSV files with optional header awareness and source tracking.

Examples

1. Concatenate files with headers

When appending multiple files with headers, use -H to keep only the header from the first file:

tva append -H docs/data/world_bank_pop.tsv docs/data/world_bank_pop.tsv

Output:

country indicator 2000 2001

ABW SP.URB.TOTL 42444 43048

ABW SP.URB.GROW 1.18 1.41

AFG SP.URB.TOTL 4436311 4648139

AFG SP.URB.GROW 3.91 4.66

ABW SP.URB.TOTL 42444 43048

ABW SP.URB.GROW 1.18 1.41

AFG SP.URB.TOTL 4436311 4648139

AFG SP.URB.GROW 3.91 4.66

2. Track source file

Add a column indicating the source file:

tva append -H --track-source docs/data/world_bank_pop.tsv

Output:

file country indicator 2000 2001

world_bank_pop ABW SP.URB.TOTL 42444 43048

world_bank_pop ABW SP.URB.GROW 1.18 1.41

...

split

Splits TSV rows into multiple output files.

Usage

Split file.tsv into multiple files with 1000 lines each:

tva split --lines-per-file 1000 --header-in-out file.tsv

This will create files like file_0001.tsv, file_0002.tsv, etc., each containing up to 1000 data

rows (plus the header in each file if --header-in-out is used).

Statistics Documentation

This document explains how to use the statistics and summary commands in tva: stats, **bin

**, and uniq. These commands allow you to summarize data, discretize values, and deduplicate

rows.

Introduction

stats: Calculates summary statistics (like sum, mean, max) for fields, optionally grouping by key fields.bin: Discretizes numeric values into bins (useful for histograms).uniq: Deduplicates rows based on a key, with options for equivalence classes and occurrence numbering.

stats (Summary Statistics)

The stats command calculates summary statistics for specified fields. It mimics the functionality

of tsv-summarize.

Basic Usage

tva stats [input_files...] [options]

Options

--header/-H: Treat the first line of each file as a header.--group-by/-g: Fields to group by (e.g.,1,1,2).--count/-c: Count the number of rows.--sum: Calculate sum of fields.--mean: Calculate mean of fields.--min: Calculate min of fields.--max: Calculate max of fields.--median: Calculate median of fields.--stdev: Calculate standard deviation of fields.--variance: Calculate variance of fields.--mad: Calculate median absolute deviation of fields.--first: Get the first value of fields.--last: Get the last value of fields.--unique: List unique values of fields (comma separated).--collapse: List all values of fields (comma separated).--rand: Pick a random value from fields.

Examples

1. Calculate basic stats for a column

Calculate the mean and max of the estimate column in docs/data/us_rent_income.tsv:

tva stats docs/data/us_rent_income.tsv --header --mean estimate --max estimate

Output:

estimate_mean estimate_max

14316.2 32940

2. Group by a column

Group by variable and calculate the mean of estimate:

tva stats docs/data/us_rent_income.tsv --header --group-by variable --mean estimate

Output:

variable estimate_mean

income 27635.2

rent 997.2

3. Count rows per group

Count the number of rows for each unique value in NAME:

tva stats docs/data/us_rent_income.tsv --header --group-by NAME --count

Output (first 5 lines):

NAME count

Alabama 2

Alaska 2

Arizona 2

Arkansas 2

bin (Discretize Values)

The bin command discretizes numeric values into bins. This is useful for creating histograms or

grouping continuous data.

Basic Usage

tva bin [input_files...] --width <width> --field <field> [options]

Options

--width/-w: Bin width (bucket size). Required.--field/-f: Field to bin (1-based index or name). Required.--min/-m: Alignment/Offset (bin start). Default: 0.0.--new-name: Append as new column with this name (instead of replacing).--header/-H: Input has header.

Notes

- Formula:

floor((value - min) / width) * width + min - Replaces the value in the target field with the bin start (lower bound) unless

--new-nameis used.

Examples

1. Bin a numeric column

Bin the breaks column in docs/data/warpbreaks.tsv with a width of 10:

tva bin docs/data/warpbreaks.tsv --header --width 10 --field breaks

Output (first 5 lines):

wool tension breaks

A L 20

A L 30

A L 50

A M 10

2. Bin with alignment

Bin the breaks column, aligning bins to start at 5:

tva bin docs/data/warpbreaks.tsv --header --width 10 --min 5 --field breaks

Output (first 5 lines):

wool tension breaks

A L 25

A L 25

A L 45

A M 15

3. Append bin as a new column

Bin the breaks column and append the result as breaks_bin:

tva bin docs/data/warpbreaks.tsv --header --width 10 --field breaks --new-name breaks_bin

Output (first 5 lines):

wool tension breaks breaks_bin

A L 26 20

A L 30 30

A L 54 50

A M 18 10

uniq (Deduplicate Rows)

The uniq command deduplicates rows of one or more TSV files without sorting. It uses a hash set to

track unique keys.

Basic Usage

tva uniq [input_files...] [options]

Options

--fields/-f: TSV fields (1-based) to use as dedup key.--header/-H: Treat the first line of each input as a header.--ignore-case/-i: Ignore case when comparing keys.--repeated/-r: Output only lines that are repeated based on the key.--at-least/-a: Output only lines that are repeated at least INT times.--max/-m: Max number of each unique key to output (zero is ignored).--equiv/-e: Append equivalence class IDs rather than only uniq entries.--number/-z: Append occurrence numbers for each key.

Examples

1. Deduplicate whole rows

tva uniq docs/data/us_rent_income.tsv --header

Output (first 5 lines):

GEOID NAME variable estimate moe

01 Alabama income 24476 136

01 Alabama rent 747 3

02 Alaska income 32940 508

2. Deduplicate by a specific column

Deduplicate based on the NAME column:

tva uniq docs/data/us_rent_income.tsv --header -f NAME

Output (first 5 lines):

GEOID NAME variable estimate moe

01 Alabama income 24476 136

02 Alaska income 32940 508

04 Arizona income 27517 148

05 Arkansas income 23789 165

3. Output repeated lines only

Output lines where the NAME column appears more than once:

tva uniq docs/data/us_rent_income.tsv --header -f NAME --repeated

Output (first 5 lines):

GEOID NAME variable estimate moe

01 Alabama rent 747 3

02 Alaska rent 1200 13

04 Arizona rent 972 4

05 Arkansas rent 709 5

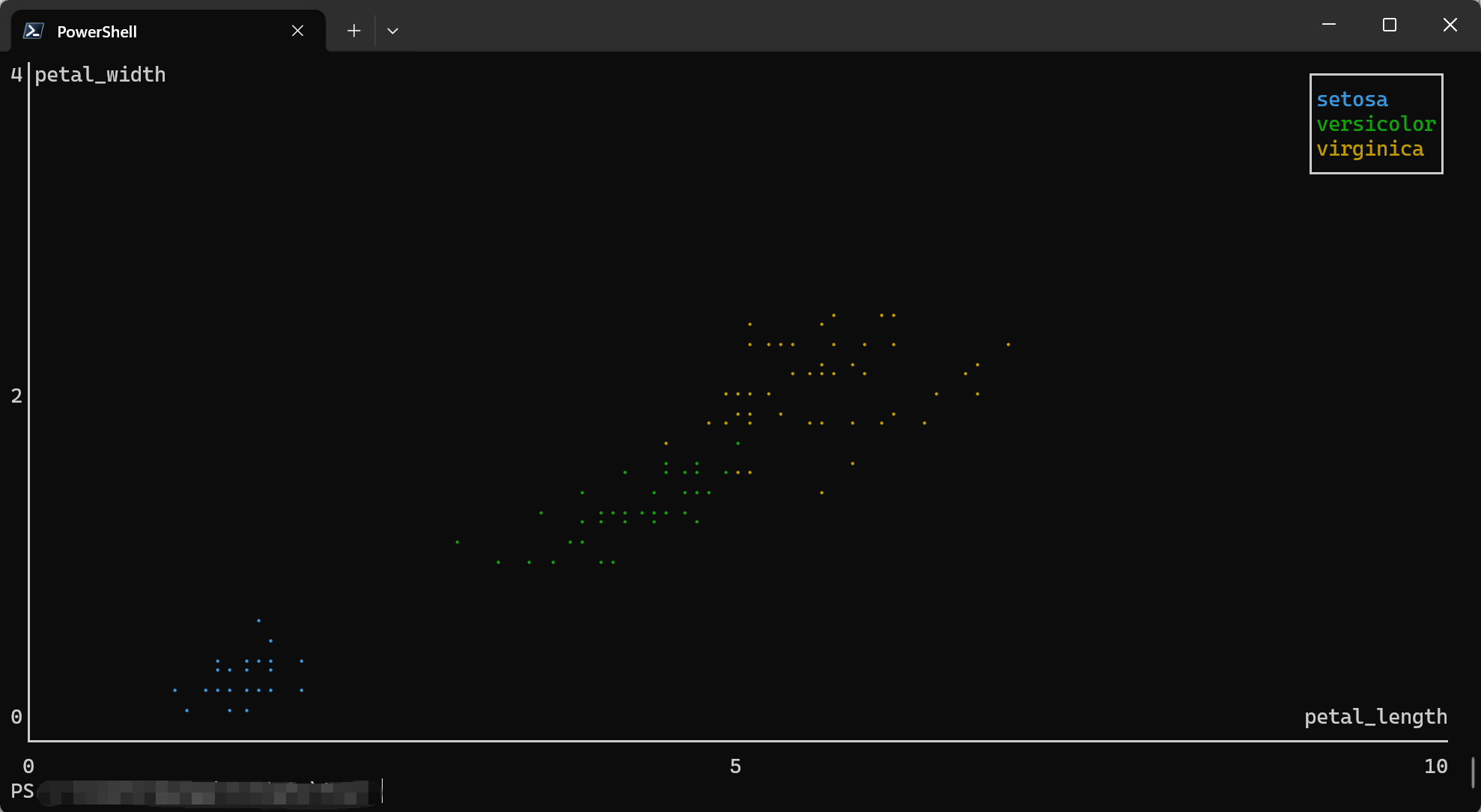

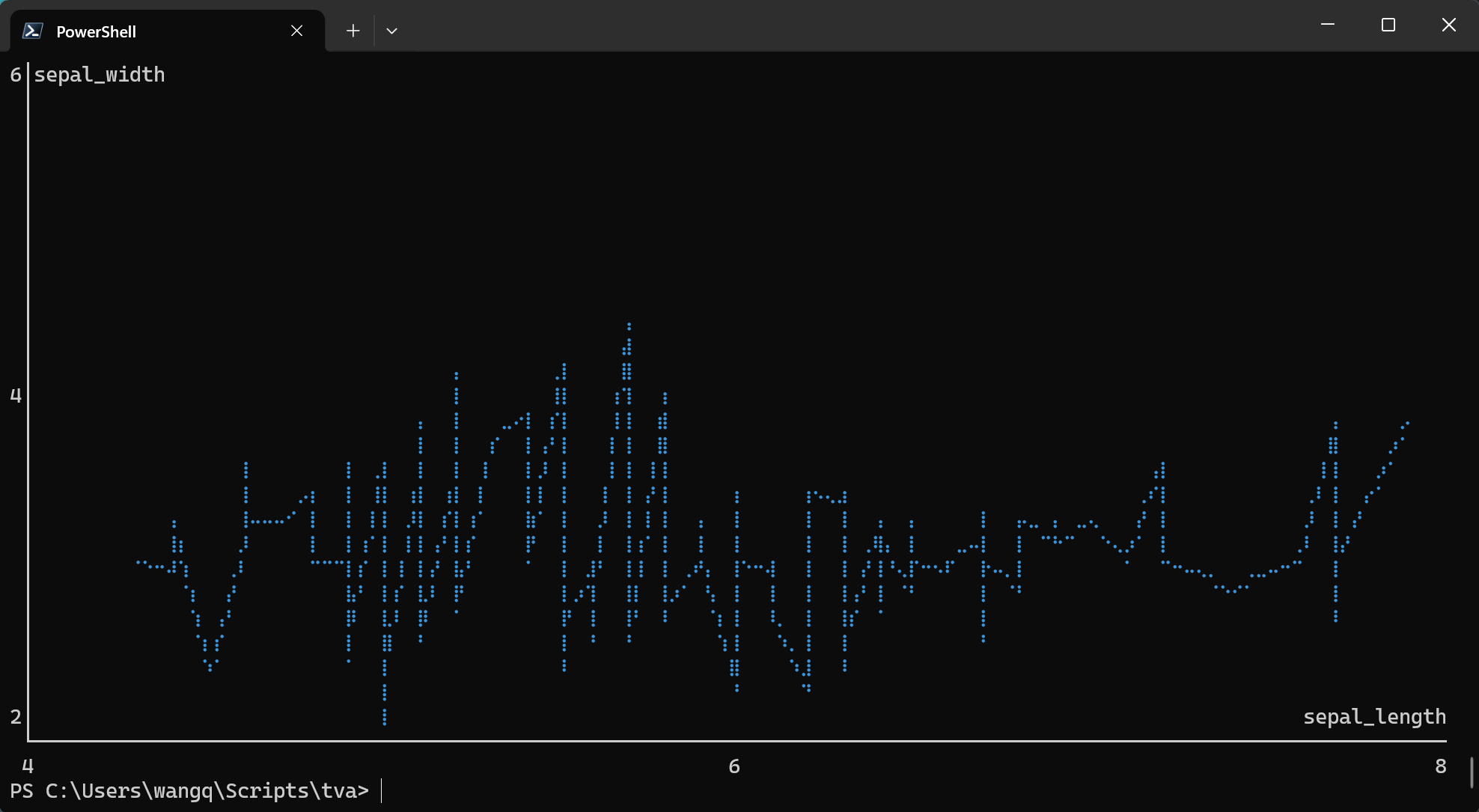

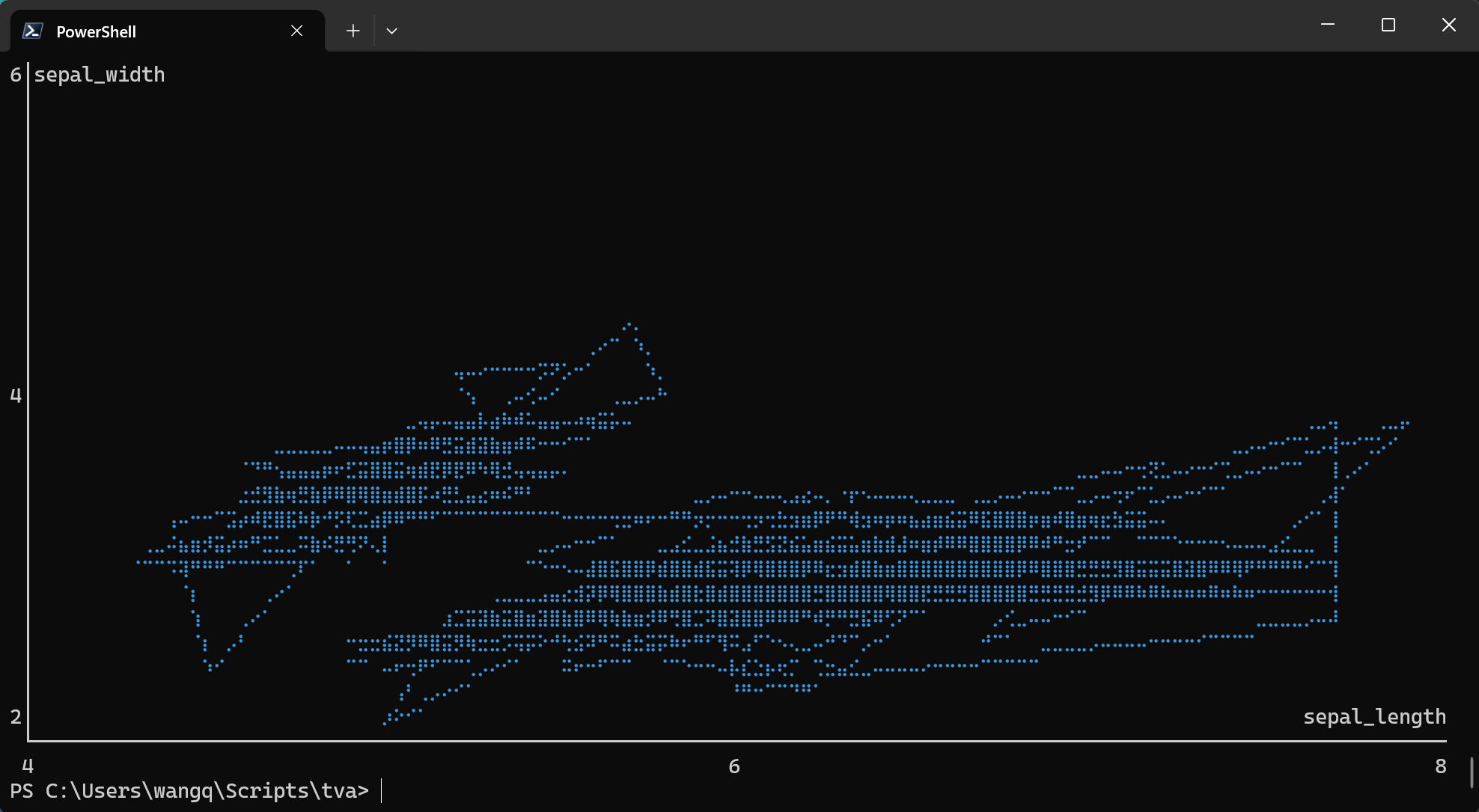

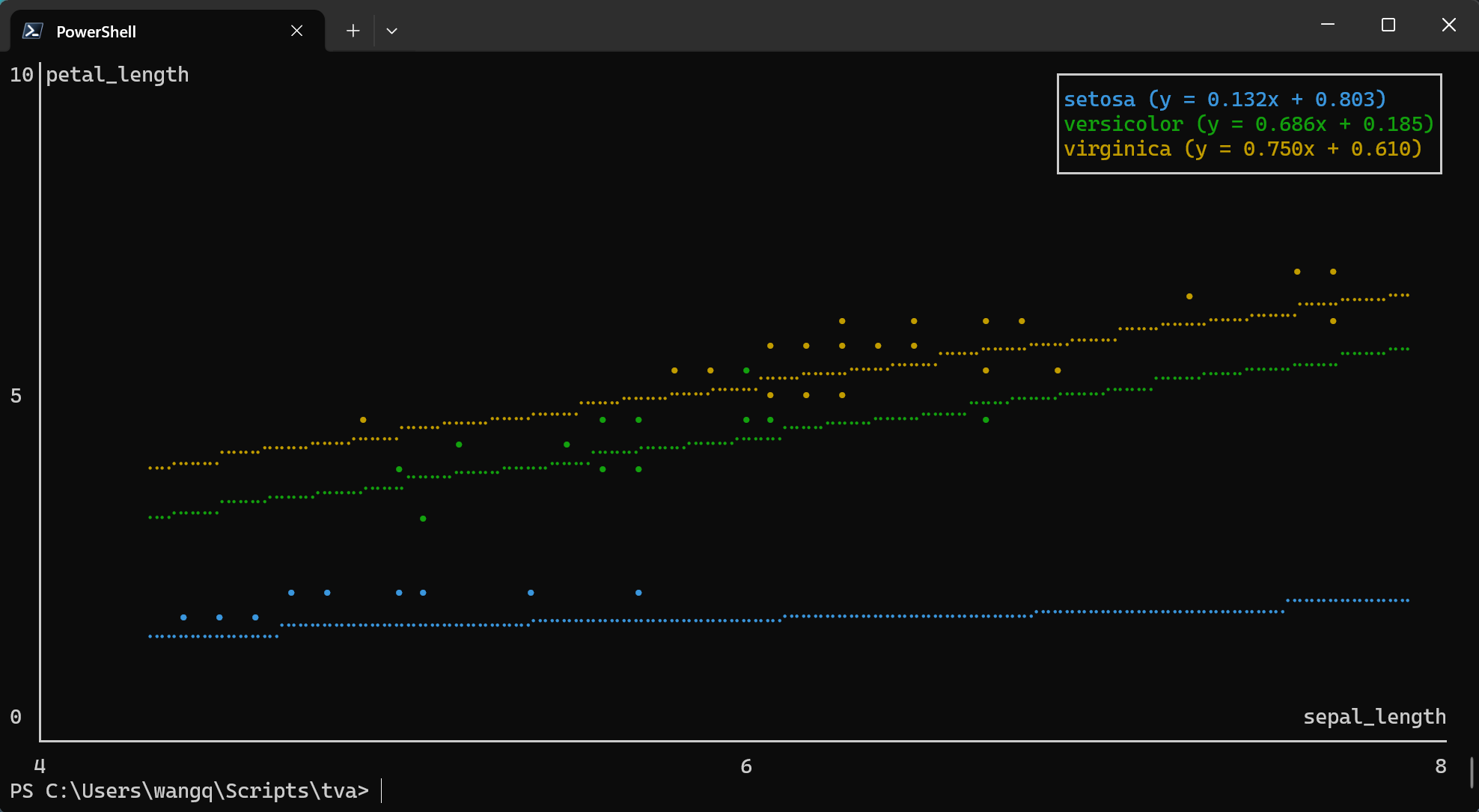

Plotting Documentation

This document explains how to use the plotting commands in tva: plot point. These commands

bring data visualization capabilities to the terminal, inspired by the grammar of graphics

philosophy of ggplot2.

Introduction

Terminal-based plotting allows you to quickly visualize data without leaving the command line. tva

provides plotting tools that render directly in your terminal using ASCII/Unicode characters:

plot point: Draws scatter plots or line charts from TSV data.plot box: Draws box plots (box-and-whisker plots) from TSV data.

plot point (Scatter Plots and Line Charts)

The plot point command creates scatter plots or line charts directly in your terminal. It maps TSV

columns to visual aesthetics (position, color) and renders the chart using ASCII/Unicode characters.

Basic Usage

tva plot point [input_file] --x <column> --y <column> [options]